Common sense prioritisation

Stop fooling thyself with scoring spreadsheets, just fix problems

Timo here. I write about the slower Product Discovery topics. The essentials, not the latest thing; the internet already has plenty of those.

Figuring things out as I go, 100% written by a human (me), with a new post every now and then.

The midwit meme. It captures a pattern that you can apply to many things:

You start simple, you overcomplicate things as you learn more, then eventually come back to simple (but with better reasoning).

I am the midwit (the person in the middle) when it comes to prioritisation, but I’m beginning to find that “fix problems” might be the better option.

1) I’ve tried a whole bunch of frameworks

At the core, I do prioritisation to achieve two things:

Help decide and align on what to do first. Something beyond “because I said so”, because that hardly works when working together with other people.

Predict success: that the thing I choose to do first delivers more value, relatively consistently, than the other things that now have to wait.

I went through my archives and found some spreadsheets I’ve used over the last years. Very vintage:

PIE

Can’t remember which client this was, but the design and A/B testing focus tells me this was at least 6 years ago.

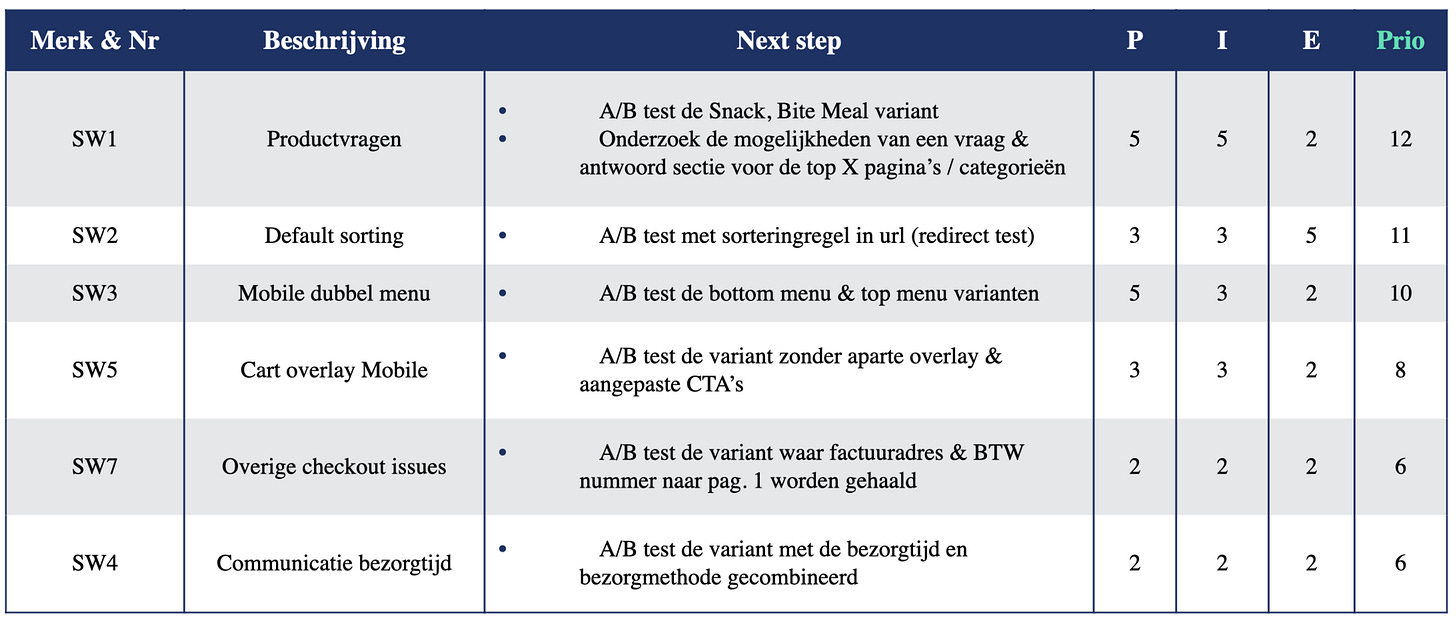

PXL

This was the PXL framework for a non-profit. PXL does the same as PIE/ICE/RICE but attempts to make the scoring less arbitrary.

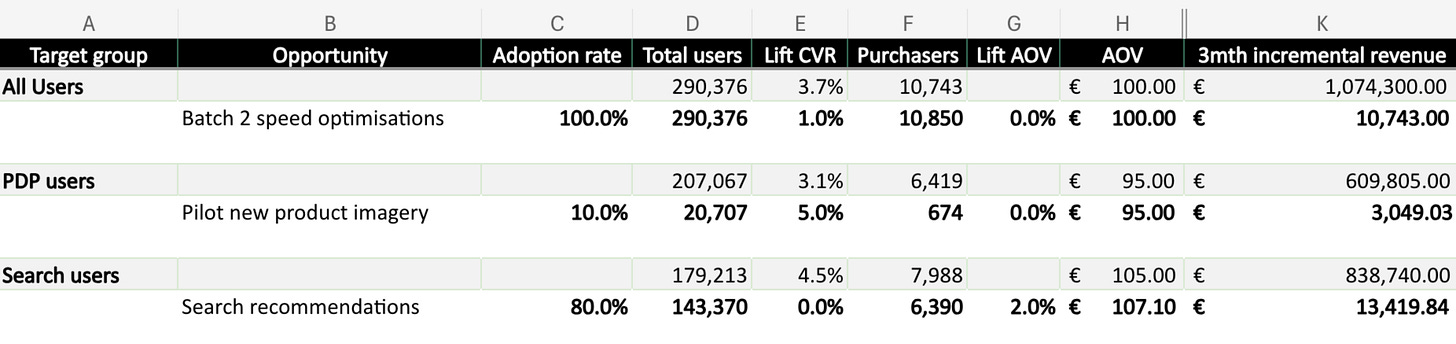

Potential revenue impact

This felt like a big upgrade. I could show the potential revenue impact for each opportunity. Essentially it’s the same as the other ones but with an extra € step.

God knows how I came up with those Conversion Rate (CVR) lift percentages.
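To show why that extra € step is only as good as the lift guess, here is a minimal sketch. I no longer have the original formula, so this assumes a simple one (visitors × baseline CVR × relative lift × average order value). Every number and name in it is hypothetical.

```python
# Hypothetical inputs: none of these numbers come from a real client.
MONTHLY_VISITORS = 40_000
BASELINE_CVR = 0.02          # assumed: 2% of visitors convert today
AVERAGE_ORDER_VALUE = 80.0   # assumed, in €


def extra_monthly_revenue(relative_lift: float) -> float:
    """Extra € per month if CVR improves by `relative_lift` (0.05 means +5%)."""
    extra_orders = MONTHLY_VISITORS * BASELINE_CVR * relative_lift
    return extra_orders * AVERAGE_ORDER_VALUE


# The only thing that changes between rows is the guessed lift.
for guessed_lift in (0.03, 0.05, 0.10):
    revenue = extra_monthly_revenue(guessed_lift)
    print(f"guessed lift {guessed_lift:.0%}: €{revenue:,.0f} per month")
```

In this sketch the euro figure scales one-to-one with the guessed lift, so a 10% guess promises twice the revenue of a 5% guess. The output looks precise, but it is still the same guess with a currency sign in front of it.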

This is just a selection of the things I’ve tried. There are MANY other prio frameworks out there.

2) Why they don’t work for me and why I feel like a 🤡 when using them

If I’m being REALLY honest, none of these frameworks have been super helpful.

1) They don’t help decide and align

The sense of rigour and control these frameworks give might work in a perfect synthetic world where everything is controlled and life doesn’t happen.

I might score an initiative a 10/10 and prioritise it, but I’ve found that life always happens. It’s more the norm than the exception.

Here’s a short list I’ve kept over the last few months of the things that actually decided what was prioritised (life happening):

Because the engineer that was required was pulled into a different project, so we had to change plans to properly utilise the available capacity

Because leadership said so

Because a stakeholder needed a favour in return for something I needed a couple of months back

Because a competitor had just launched a new feature, so there was panic in the management team and we needed to have it too

Because a different new thing was raised and everyone agreed that it was an obvious problem with an obvious solution; it overruled the scoring framework

Whenever these things happened the scoring was discarded instantly. Didn’t matter if I used PIE/ICE/PXL or whatever. The spreadsheet wasn’t going to beat any of these things, and these things happen constantly.

Lack of maturity on my end and / or in the organisation? Absolutely. But I’m going to confidently assume it’s reality for more people than just me.

2) They don’t predict success

But there isn’t always something in the way. Sometimes leadership agrees, stakeholders don’t need any favours and things go exactly as planned.

I can proceed with my neat little scoring spreadsheet with a sense of control and rigour.

But here’s my problem with these things:

It accepts guessing. I should fill in a ?/10 if I’m unsure, which should prompt me to go back and test my assumptions first. In practice I start guessing a 6/10 or 7/10 and the framework just accepts it. The more complex the framework, the more in control I feel, but that feeling is an illusion. That’s worse than just acknowledging I don’t know and going from there.

The scoring is arbitrary. How do I differentiate between a 6/10 and 7/10? Yet that tiny difference is exactly what determines what goes now and what goes next. I’ve also seen 6/10 features have much more impact than 7/10 features, multiple times*

They’re useless when it’s obvious. The times when I didn’t struggle were when I confidently filled in 10/10s across the board, but those features never needed a prioritisation matrix in the first place. It’s the mushy middle where I need guidance.

The framework adds no value in any scenario. It either accepts my guessing, struggles with the mushy middle where I need it most and things are uncertain and ambiguous, or confirms what was already obvious 🤡.

*Admittedly I don’t have any long term data about this, I should keep track of that sometime.
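To make the 6/10 versus 7/10 point concrete, here is a minimal sketch. It assumes the multiplicative ICE variant (Impact × Confidence × Ease); some teams average the three scores instead. The idea names and scores are made up for illustration.

```python
def ice_score(impact: int, confidence: int, ease: int) -> int:
    """Multiplicative ICE score; each input is a guessed 1-10 value."""
    return impact * confidence * ease


def rank(ideas: dict[str, tuple[int, int, int]]) -> list[str]:
    """Return idea names ordered from highest to lowest ICE score."""
    return sorted(ideas, key=lambda name: ice_score(*ideas[name]), reverse=True)


# Hypothetical backlog: (impact, confidence, ease) per idea.
backlog = {
    "clearer messaging": (6, 6, 6),   # 216
    "new checkout flow": (8, 6, 5),   # 240
}
print(rank(backlog))

# Same backlog, but the impact guess for one idea moves from 6 to 7.
nudged = {**backlog, "clearer messaging": (7, 6, 6)}  # 252
print(rank(nudged))
```

With these invented numbers, moving a single guess by one point swaps which idea goes first. Nothing about the ideas changed, only my mood while scoring.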

3) What did work for me: ditching the spreadsheets

So spreadsheets aren’t super helpful for me. But prioritisation in itself isn’t useless; I still need to decide what goes first and predict what will work.

Not everything we prioritised was pushed back by real life happening, or failed to show an impact. There were times when those two boxes were ticked quite smoothly.

Not effortlessly, but without too much pushback and with satisfactory outcomes: we expected wins and got them fairly consistently.

We didn’t use a prioritisation framework, because there was no need.

That happened when we had obvious solutions for obvious problems.

What do I mean by those?

Problems so clearly defined that anyone can see why they matter. Simple language, strong evidence that the problem is real, and a validated solution lined up that is targeted at that specific problem.

When both the problem and solution are obvious, there is no need for a framework because there is no ambiguity to resolve. Scoring spreadsheets try to combat ambiguity and create certainty through scoring, but the certainty comes from the evidence, not a number from a formula.

Some people call these “no brainers”, but I think they’re actually “max brainers” because it requires hard discovery work for something to be the simple, obvious choice.

Example

A market research company I worked with a while back had a whole list of ideas with no clear prio. I found the messaging throughout the lead site extremely vague (common issue with B2B) so I ran a quick user test to gauge messaging clarity on the most popular landing pages. The results were terrible. When asked what they thought the site was about, 3 got it right, 19 were in the right direction (I was being very liberal) and 28 were flat out wrong (with my fav answers being “Beauty services” and “Not sure: was this an investing site?”). This was a market research company.

We ideated some new directions and ran the same test. Clarity improved ofc (else I wouldn’t have picked this example), but the thing that stood out was the ease with which the existing ideas were discarded to take on this new obvious problem.
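For anyone who wants to see how strong that first test result is, here is a rough sketch that turns the tallies into shares with an approximate 95% range. The Wilson score interval is my choice for illustration, not something we calculated at the time, and it assumes the 50 participants behave like a simple random sample, which a quick user test doesn't guarantee.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a proportion (z = 1.96)."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


# Tallies from the first messaging test described above.
results = {"got it right": 3, "right direction": 19, "flat out wrong": 28}
total = sum(results.values())  # 50 participants

for label, count in results.items():
    low, high = wilson_interval(count, total)
    share = count / total
    print(f"{label}: {count}/{total} = {share:.0%} (roughly {low:.0%} to {high:.0%})")
```

Under those assumptions, even the optimistic end of the range for "got it right" stays below one in five. That is the kind of evidence that doesn't need a score next to it.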

Doing the discovery work to make the problem and solution obvious helps with both of my prioritisation goals.

(“Make the problem obvious” sounds easier than it is. It requires synthesising insights into opportunities / problems which is its own skill: check out the separate post.)

1) It decides and aligns

The “framework to choose a framework” article I shared earlier has this nice paragraph:

“The biggest value of the frameworks above is the ability to create a forum for conversation, a place of psychological safety, and a frame for creativity. The outcome should be less about the list of features you decide to create, and more about having a dialogue for the right internal and external stakeholders to voice what they think matters the most and why.”

I agree about the forum for conversation. But in practice, I’ve found that making sure there’s a shared understanding about the problem - so making sure the same data is available and understandable for everyone - makes this so much more productive (and fun) than talking about numbers from a scoring spreadsheet.

The data from the example, showing that only 3/50 understood the messaging and that it improved in the variant, made the problem and solution obvious. That’s what got everyone to align on the prio.

I still can’t control when life happens, but I found that challenging life’s events (e.g. leadership call, shift in capacity etc.) is much easier with this data.

2) It predicts success (better than guessing numbers)

By the time we prioritised fixing the messaging, the discovery work that led up to that decision already:

proved that the problem was real (only 3/50 got it right)

made sure we would hit a substantial group (most popular landing pages)

had a validated solution lined up (clarity improved in the follow-up test)

It was still a bet - everything is - but it made it much less of a prediction game. Instead of entering random numbers in the scoring spreadsheet, we already had a good feel for Reach, Impact and Confidence through the discovery work required to make the problem obvious.

Maybe that’s my problem with these things: a framework is not a bad thing in itself. There is nothing wrong with thinking about factors like Reach, Impact or Confidence.

It’s just that these answers come from doing the hard discovery work, not from filling in the spreadsheet. THAT is where the effort should go when prioritisation is hard.

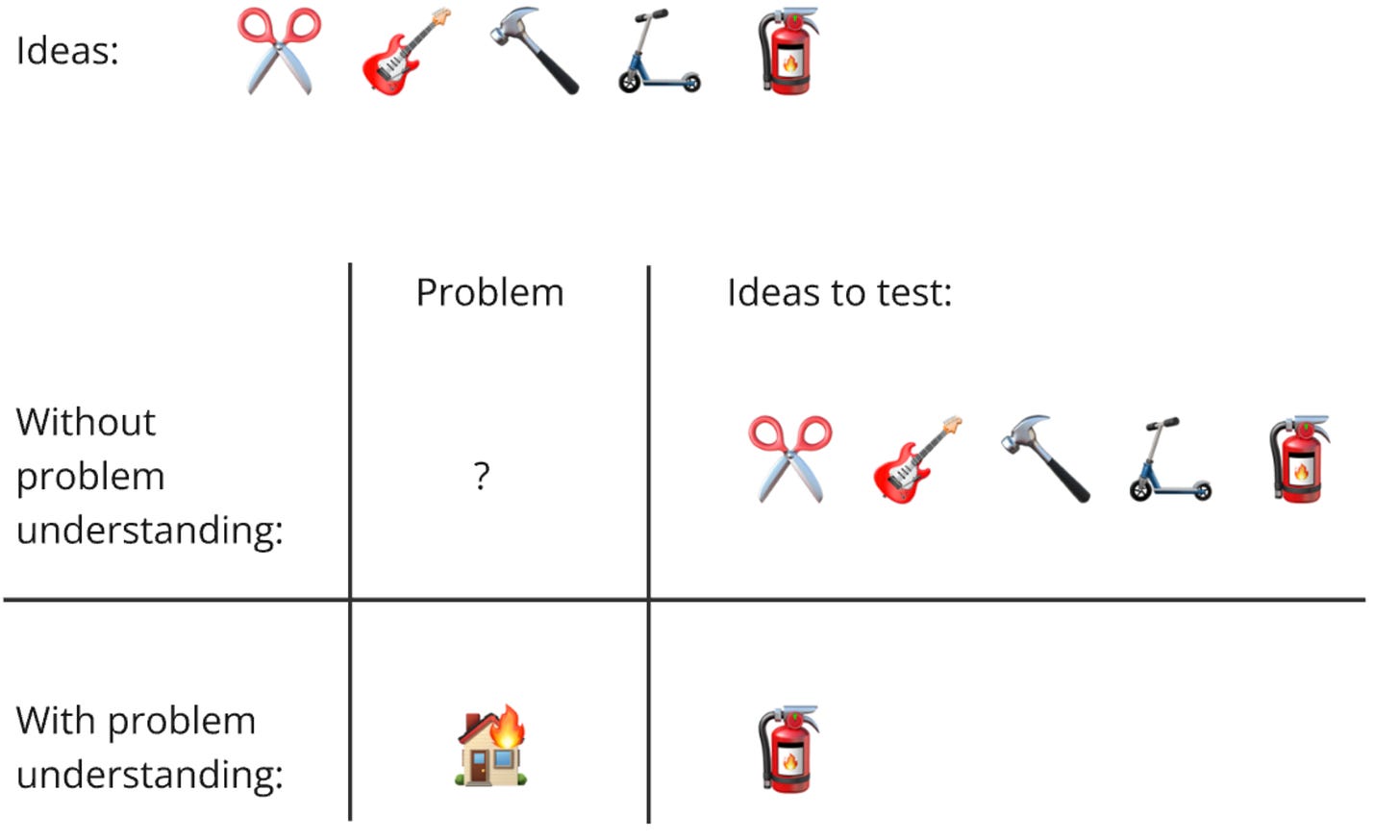

Borrowing a visual from my first post about the misuse of AB testing: maybe tinkering with frameworks and scoring spreadsheets is yet another sign that you’re not doing enough Product Discovery work.

If you find the house is on fire, you don’t need a framework to tell you to prioritise the extinguisher. The discovery work already made that common sense.

Summary: fix problems

Maybe I just haven’t found the right one, but scoring ideas via prioritisation frameworks makes me feel like a 🤡

They don’t actually help align and decide: life happens + I found stakeholders align more easily around data about the problem than numbers from a scoring spreadsheet.

They don’t actually predict success: the scoring is arbitrary, I fool myself by filling in a 6/10 instead of a ?/10 when I don’t know, and they either struggle with the mushy middle where I need them most or confirm what was already obvious.

I’m shelving prioritisation frameworks: I should spend my efforts on discovery work, finding obvious problems with obvious solutions. Certainty comes from the evidence itself, not from numbers in a formula

Personal reminder for the future: if prioritisation is hard and I find myself tinkering with frameworks and scoring spreadsheets, perhaps it’s a sign I need more product discovery. The research will make prioritisation common sense: fix problems.